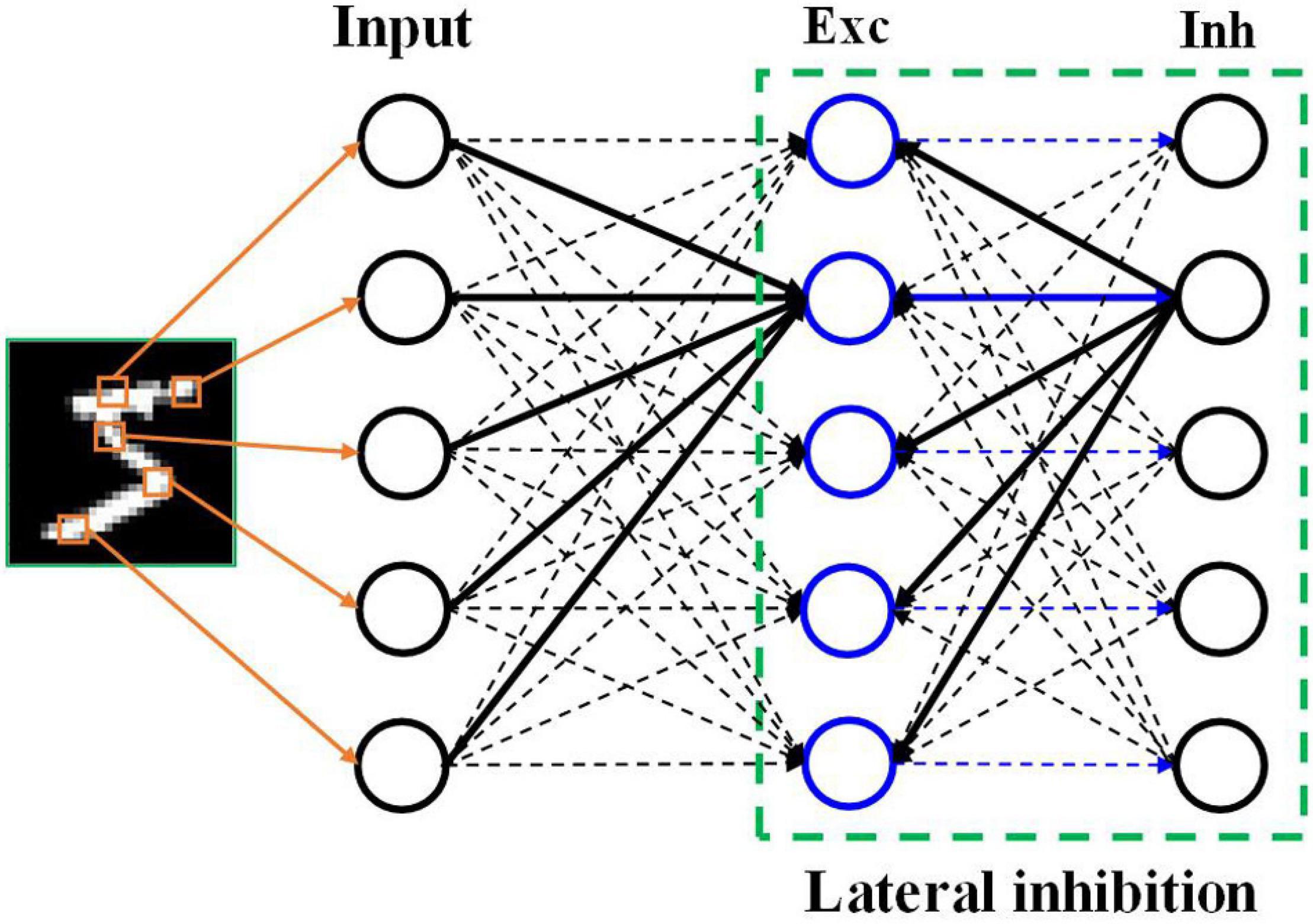

Multi-Layer Perceptron (MLP) is a fully connected hierarchical neural...

PyTorch-Direct: Introducing Deep Learning Framework with GPU-Centric Data Access for Faster Large GNN Training | NVIDIA On-Demand

Make Every feature Binary: A 135B parameter sparse neural network for massively improved search relevance - Microsoft Research

Frontiers | Neural Coding in Spiking Neural Networks: A Comparative Study for Robust Neuromorphic Systems

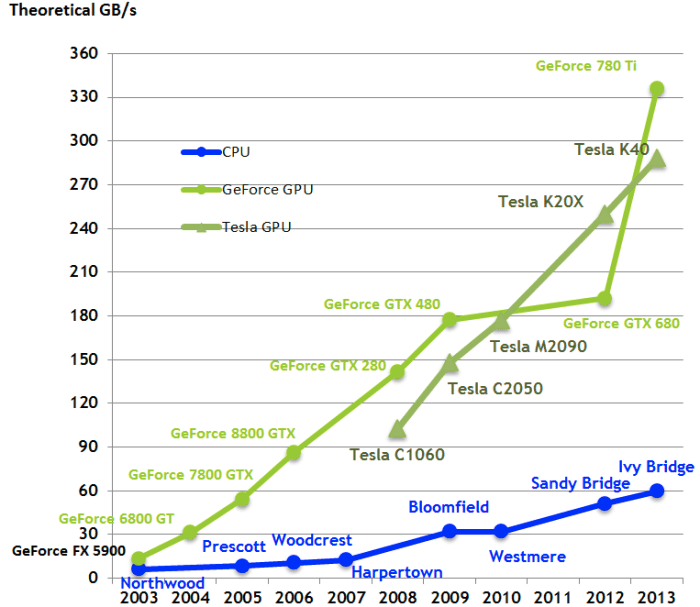

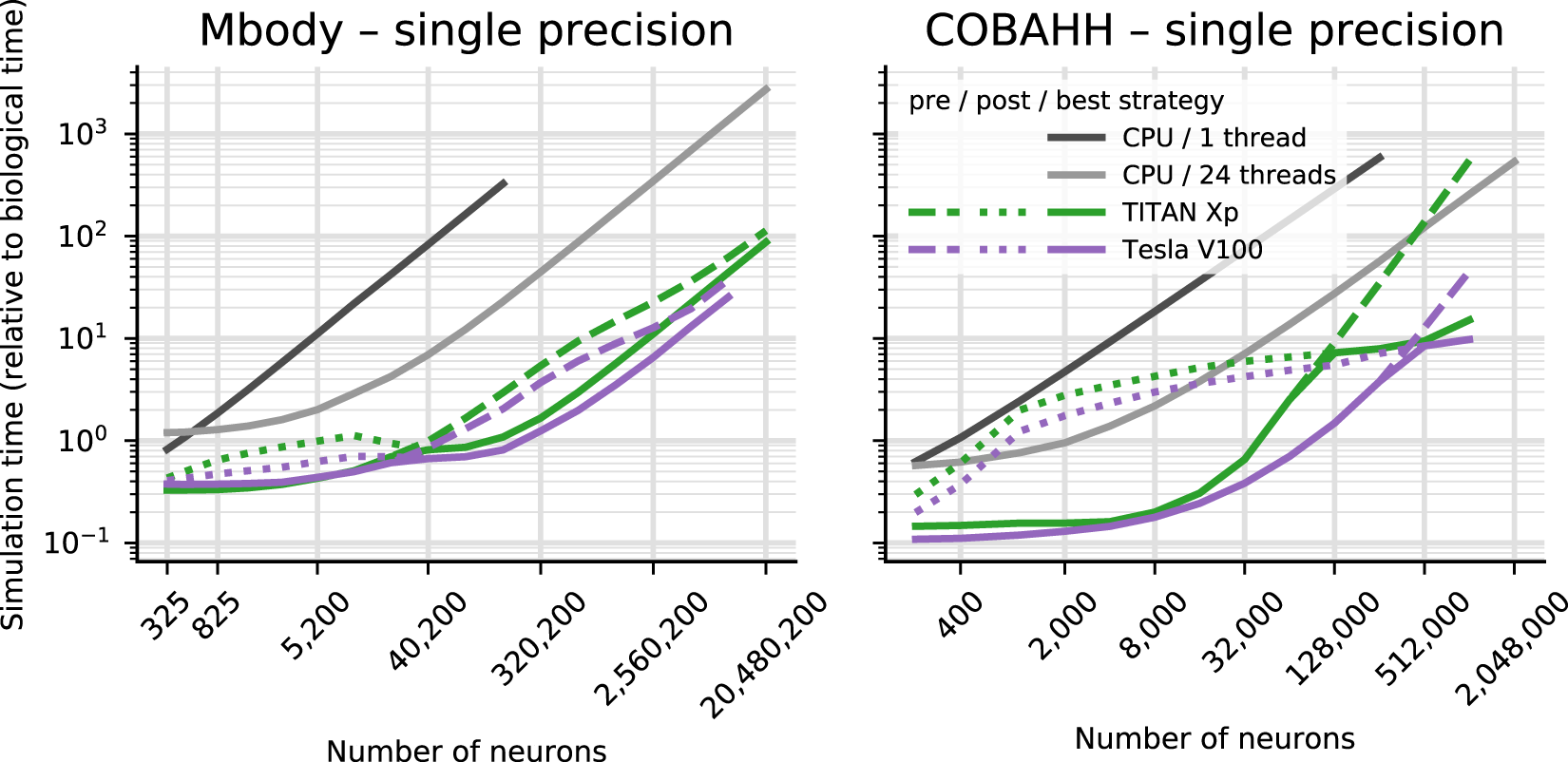

Brian2GeNN: accelerating spiking neural network simulations with graphics hardware | Scientific Reports

DeepMind, Oxford U, IDSIA, Mila & Purdue U's General Neural Algorithmic Learner Matches Task-Specific Expert Performance | Synced

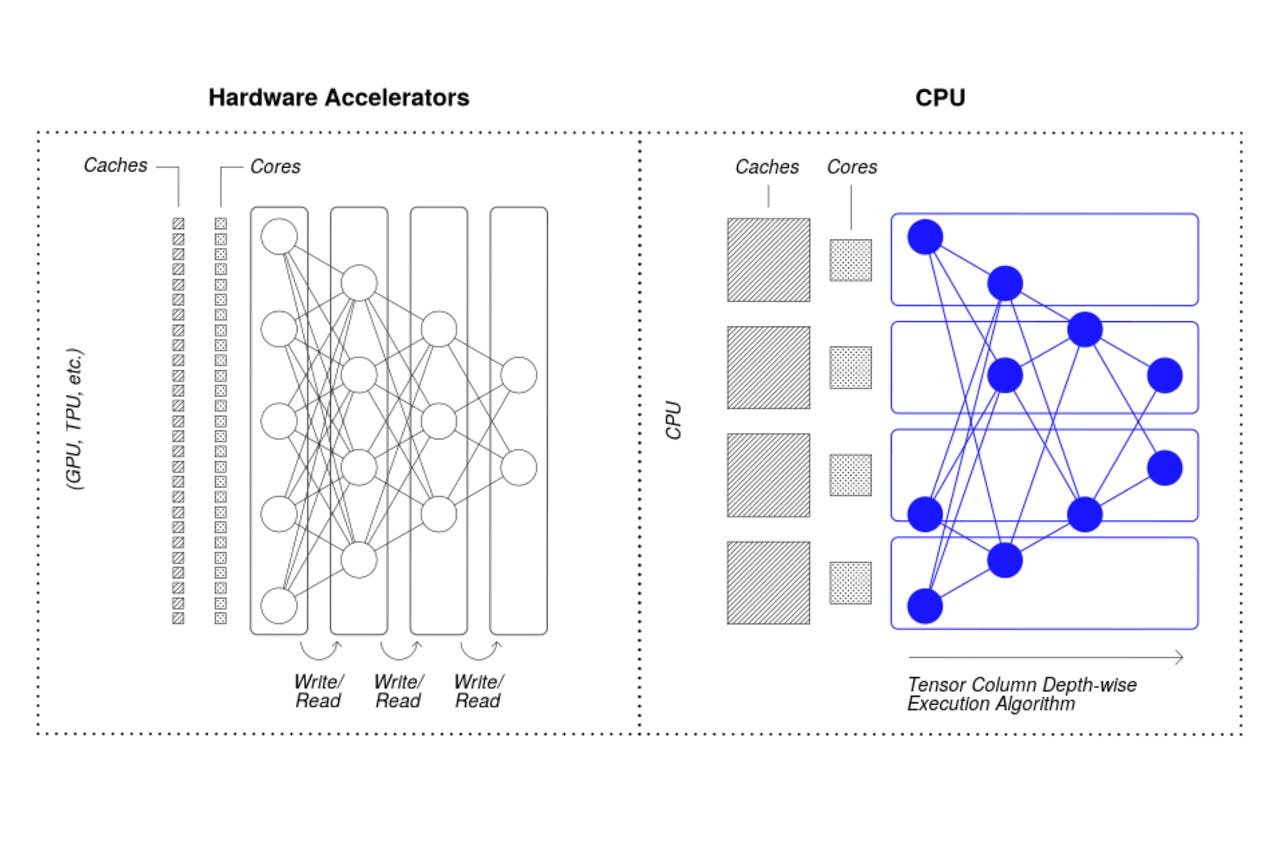

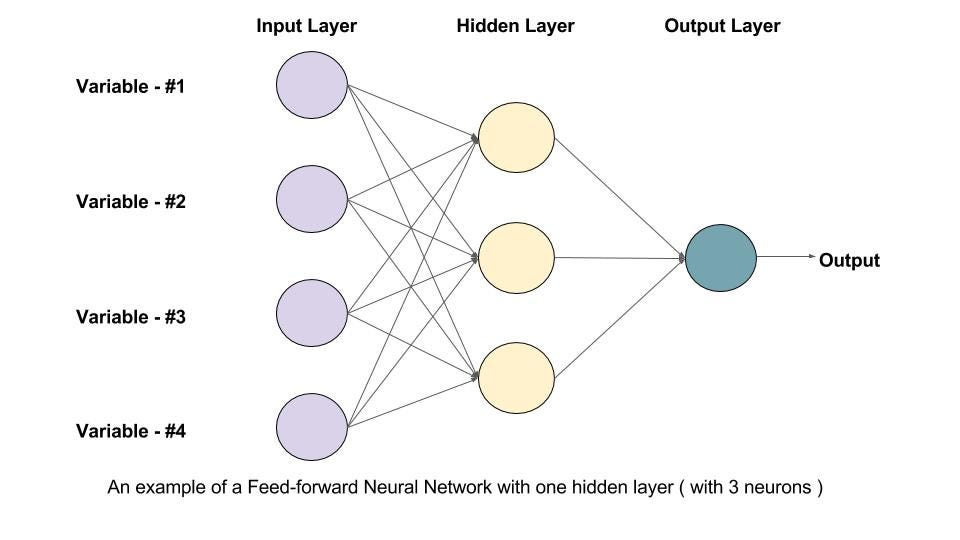

Training Feed Forward Neural Network(FFNN) on GPU — Beginners Guide | by Hargurjeet | MLearning.ai | Medium